Stiff Person Syndrome: Taxonomic Analysis Supports Use of Large Data Methods to Appraise Major Comorbidities of a Rare Disorder

Background

Stiff-person syndrome (SPS) is a painful, rare, neuro-immunological condition that develops in mid-life. Clinically, SPS includes severe spasms, disabling neuromuscular stiffness, anxiety, impaired ambulation, and frequent falls. SPS was described in 1954 as ‘stiff man syndrome’; in 1988, auto-antibodies binding glutamic acid decarboxylase-65 (GAD65Abs) were identified in SPS, and in 1993 a paraneoplastic variant was associated with amphiphysin auto-antibodies [1-4]. In a clinical appraisal of an early, large, laboratory-based cohort of SPS patients with high-titer GAD65Ab, we noted markedly elevated auto-antibody levels even after decades of symptoms [5]. In 2008, we described distinctive anatomical patterns of SPS symptoms with GAD65Abs vs. amphiphysin antibodies: GAD65Ab-SPS prominently affects thoraco-lumbar structures and amphiAb-SPS the cervico-thoracic region [6]. The extent to which SPS affects physiological systems more broadly and impacts access to care, and even its basic prevalence, are not well established. Recently, a natural history study of patients not receiving immunomodulating therapy noted worsening over time with progression to disability [7]. Care settings, healthcare access, disparities, and utilization have received limited attention [8]. Beyond these complexities, substantive clinical cohorts are uncommon in conditions as rare as SPS; as a result, the characterization of SPS, including comorbid conditions, has progressed slowly [9-12].

Even the prevalence of SPS remains subject to some controversy [13]. Importantly, GAD65Abs are not unique to SPS: high-titer GAD65Abs are also associated with cerebellar ataxia, while low-titer GAD65Abs are quite prevalent and associated with type 1 and adult-onset autoimmune diabetes, so clinical syndrome appraisal remains vitally important [9,10]. In late 2016, the use of specific ICD-10 codes became mandatory for all Medicare providers; together with wider adoption of electronic health records, this means that extensive and detailed clinical coding data are now available for analysis [14-16]. The purpose of this study was to use and validate data from CMS databases, which are nationally representative of older adults and include younger adults with disabling medical conditions, applying large-data analytical methods and case-level validation to improve understanding of SPS.

Methods

Study Design and Population

This was a cross-sectional study of persons diagnosed with SPS (ICD-10 code G25.82) using two sources of Medicare (CMS) data. Two approaches were used because SPS is rare enough that the standard (smaller) data set offered limited statistical power, whereas the larger available data set did not contain important demographic information; internal and external validation assessments supported this approach (see below) [17,18]. The two approaches are summarized in Table 1. Phase 1 involved systematic case-count extraction from several data files based on a sample population of over 6 million beneficiaries in the 20% sample of CMS 2016 data (CMS-20) administered by the Chronic Condition Data Warehouse (CCDW) [19]. Phase 2 involved Carrier claim file records for a sample population of about 1.5 million beneficiaries in the 5% sample of CMS 2017 data (CMS-5), accessed under a formal Data Use Agreement administered by the VA (VIReC). Validation was performed on CMS-5 data by comparing those with a primary diagnosis of SPS by neurologists to those diagnosed by others. Medicare provides healthcare coverage to over 99% of legal residents of the United States aged 65 years or older. CMS files also include those younger than 65 who receive coverage on the basis of disability or diagnosis with “qualifying conditions” such as renal failure [20].

Table 1: Comparison of Phase 1 and Phase 2 data sources, research plan development, and hypothesis testing.

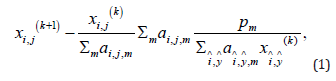

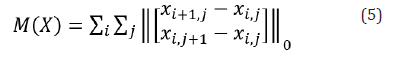

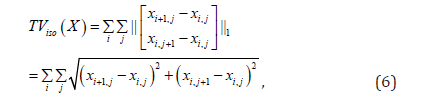

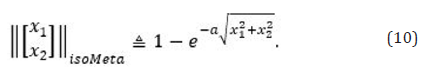

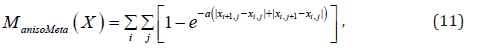

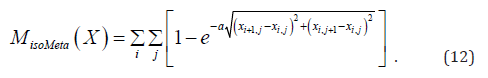

In Phase 1, case counts were extracted systematically for each code or group of codes of a priori interest to the study. Submitting two codes simultaneously retrieves the number of beneficiaries coded for either condition (the union of the two groups). We therefore constructed Boolean algebra algorithms to calculate the ‘intersection’ of two diagnoses, i.e., the number of patients having both of two specified conditions [21]. Using a system of Boolean equations, we created profiles documenting the presence or absence of several conditions simultaneously.
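The Boolean equations themselves are not given in the text. A minimal sketch, assuming a warehouse query that reports only union counts (modelled by a hypothetical `union_count` function), rests on the inclusion-exclusion identity: for two codes, |A ∩ B| = |A| + |B| − |A ∪ B|, and the same idea extends to presence/absence profiles over several codes. Function names and the toy data are illustrative only, not the study's code.

```python
from itertools import combinations
from typing import Callable, FrozenSet, Iterable, Iterator

# Hypothetical query hook: returns how many beneficiaries are coded for ANY of
# the given ICD-10 codes, i.e. what a multi-code extraction reports (a union).
UnionCount = Callable[[FrozenSet[str]], int]


def _subsets(codes: Iterable[str], min_size: int = 0) -> Iterator[FrozenSet[str]]:
    """Yield every subset of `codes` with at least `min_size` members."""
    items = sorted(codes)
    for k in range(min_size, len(items) + 1):
        for combo in combinations(items, k):
            yield frozenset(combo)


def intersection_count(codes: FrozenSet[str], union_count: UnionCount) -> int:
    """Beneficiaries coded for ALL `codes`, recovered from union counts.

    Inclusion-exclusion: |A1 & ... & An| = sum over non-empty subsets S of
    (-1)**(|S| + 1) * |union of S|. For two codes: |A| + |B| - |A or B|.
    """
    if not codes:
        raise ValueError("at least one code is required")
    total = 0
    for subset in _subsets(codes, min_size=1):
        sign = 1 if len(subset) % 2 == 1 else -1
        total += sign * union_count(subset)
    return total


def profile_count(present: FrozenSet[str], absent: FrozenSet[str],
                  union_count: UnionCount) -> int:
    """Beneficiaries coded for every code in `present` and none in `absent`."""
    total = 0
    for extra in _subsets(absent):
        sign = 1 if len(extra) % 2 == 0 else -1
        total += sign * intersection_count(present | extra, union_count)
    return total


# Toy data with invented beneficiary IDs, for illustration only.
toy = {"G25.82": {1, 2, 3, 4}, "R25.2": {2, 3, 5}, "E10.9": {3, 6}}


def toy_union(codes: FrozenSet[str]) -> int:
    return len(set().union(*(toy[c] for c in codes)))


print(intersection_count(frozenset({"G25.82", "R25.2"}), toy_union))  # 2
print(profile_count(frozenset({"G25.82", "R25.2"}), frozenset({"E10.9"}), toy_union))  # 1
```

Only union counts are needed, which matches the extraction behaviour described; the number of queries grows as 2^n with the number of codes, so profiles are practical only for a handful of conditions at a time.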

In Phase 2, data included individual claim-by-claim records from CMS-5 with the following information: ICD-10 codes (up to 12 per visit), provider data, and selected beneficiary demographics for each fee-based encounter. We defined a ‘coding event’ as the inclusion of a code in a claim for a specific patient. We then used SAS and SAS SQL to establish a normalized relational database structure for the data, with beneficiaries as the primary key, associating demographic data and all 2017 ICD-10 coding events.
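The study used SAS; as a swapped-in illustration, the same normalized structure can be sketched with SQLite through Python's standard `sqlite3` module. Table and column names here are hypothetical (not CMS field names), and a coding event is counted as one claim that includes the code, following the definition above.

```python
import sqlite3

# Hypothetical schema: table and column names are illustrative, not CMS field names.
SCHEMA = """
CREATE TABLE beneficiary (
    bene_id TEXT PRIMARY KEY,
    sex     TEXT,
    age     INTEGER
);
CREATE TABLE claim (
    claim_id TEXT PRIMARY KEY,
    bene_id  TEXT NOT NULL REFERENCES beneficiary(bene_id),
    npi      TEXT
);
-- One row per diagnosis slot on a claim (positions 1-12; 1 = primary).
CREATE TABLE claim_dx (
    claim_id TEXT NOT NULL REFERENCES claim(claim_id),
    position INTEGER NOT NULL CHECK (position BETWEEN 1 AND 12),
    icd10    TEXT NOT NULL,
    PRIMARY KEY (claim_id, position)
);
"""


def coding_events(conn: sqlite3.Connection, icd10: str) -> dict:
    """Coding events per beneficiary: number of distinct claims that include `icd10`."""
    rows = conn.execute(
        """
        SELECT c.bene_id, COUNT(DISTINCT d.claim_id)
        FROM claim_dx AS d
        JOIN claim AS c ON c.claim_id = d.claim_id
        WHERE d.icd10 = ?
        GROUP BY c.bene_id
        ORDER BY c.bene_id
        """,
        (icd10,),
    ).fetchall()
    return dict(rows)


# Toy rows with invented identifiers.
conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
conn.executemany("INSERT INTO beneficiary VALUES (?, ?, ?)",
                 [("B1", "F", 67), ("B2", "M", 58)])
conn.executemany("INSERT INTO claim VALUES (?, ?, ?)",
                 [("C1", "B1", "N1"), ("C2", "B1", "N2"), ("C3", "B2", "N3")])
conn.executemany("INSERT INTO claim_dx VALUES (?, ?, ?)",
                 [("C1", 1, "G25.82"), ("C1", 2, "R26.9"),
                  ("C2", 3, "G25.82"), ("C3", 1, "I10")])
print(coding_events(conn, "G25.82"))  # {'B1': 2}
```

Keeping each diagnosis slot as its own row preserves the coding position, which the provider-taxonomy validation relies on.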

Identification of Cases

Cases were defined as those beneficiaries in the database with one or more coding events during 2017 specifying G25.82 (SPS). For validation, cases were classified according to the position of SPS as primary or secondary, and whether the claim-filing healthcare provider was a neurologist (NPI primary taxonomy code 2084N0400X) or other.
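A minimal sketch of the case classification, assuming claims arrive as records with the provider's primary taxonomy already attached and diagnosis codes in claim order (index 0 = primary position). The `Claim` type, the placeholder `"OTHER"` taxonomy, and the precedence used when a beneficiary has several SPS claims are assumptions for illustration; only G25.82 and 2084N0400X come from the text.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple

SPS_CODE = "G25.82"                # ICD-10 code for SPS (from the text)
NEUROLOGY_TAXONOMY = "2084N0400X"  # NPI primary taxonomy for neurology (from the text)

# Assumed precedence when a beneficiary has several SPS claims (illustrative only).
RANK = {
    ("primary", "neurology"): 0,
    ("primary", "other"): 1,
    ("secondary", "neurology"): 2,
    ("secondary", "other"): 3,
}


@dataclass(frozen=True)
class Claim:
    bene_id: str
    provider_taxonomy: str
    dx_codes: Tuple[str, ...]  # ICD-10 codes in claim order; index 0 = primary position


def classify_cases(claims: Iterable[Claim]) -> Dict[str, Tuple[str, str]]:
    """Return {bene_id: (position, specialty)} for every beneficiary with an SPS coding event."""
    best: Dict[str, Tuple[str, str]] = {}
    for claim in claims:
        if SPS_CODE not in claim.dx_codes:
            continue  # no SPS coding event on this claim
        position = "primary" if claim.dx_codes[0] == SPS_CODE else "secondary"
        specialty = "neurology" if claim.provider_taxonomy == NEUROLOGY_TAXONOMY else "other"
        category = (position, specialty)
        current = best.get(claim.bene_id)
        if current is None or RANK[category] < RANK[current]:
            best[claim.bene_id] = category
    return best


claims = [
    Claim("B1", "OTHER", ("R26.9", "G25.82")),
    Claim("B1", NEUROLOGY_TAXONOMY, ("G25.82", "R76.0")),
    Claim("B2", "OTHER", ("I10", "G25.82")),
    Claim("B3", NEUROLOGY_TAXONOMY, ("G20",)),
]
print(classify_cases(claims))
# {'B1': ('primary', 'neurology'), 'B2': ('secondary', 'other')}
```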

Non-SPS Population and Confounding Variable Analysis

The non-SPS population for this study was all those in the database not coded for SPS. In Phase 1, to ensure an appropriate comparator group, we first constructed a condition ‘master list’ that would generate the estimated total of ‘active’ beneficiaries in CMS-20. The ‘master list’ codes included common conditions, anticipated SPS comorbidities, diverse neurological conditions, and autoimmune conditions; additional conditions were added until adding another condition did not increase the total number of beneficiaries by more than 0.01%. We also required that the ‘master list’ conditions (minus G25.82) generate a population from which all 409 SPS-coded beneficiaries could be retrieved. The resulting codes are shown in Table 2. In Phase 2, ‘active’ status was defined as having one or more ICD-10 diagnoses in CMS-5.
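The stopping rule above can be sketched as a greedy loop, assuming the same kind of hypothetical union-count query as in Phase 1. The greedy ordering (largest gain first) is an assumption; the text states only the 0.01% threshold and the requirement that all SPS-coded beneficiaries be retrievable, which holds exactly when adding G25.82 leaves the union size unchanged.

```python
from typing import Callable, FrozenSet, List, Sequence, Tuple

UnionCount = Callable[[FrozenSet[str]], int]  # hypothetical union-count query


def build_master_list(candidates: Sequence[str], union_count: UnionCount,
                      threshold: float = 1e-4) -> Tuple[List[str], int]:
    """Greedily add the code that enlarges the union most; stop once no remaining
    code raises the total by more than `threshold` (1e-4 = 0.01%)."""
    selected: List[str] = []
    total = 0
    remaining = list(candidates)
    while remaining:
        gain, best = max(
            (union_count(frozenset(selected + [code])) - total, code) for code in remaining
        )
        if selected and gain <= threshold * total:
            break
        selected.append(best)
        remaining.remove(best)
        total += gain
    return selected, total


def covers_all_cases(master: List[str], case_code: str, union_count: UnionCount) -> bool:
    """True if every beneficiary coded with `case_code` is already in the master
    population, i.e. |M or S| == |M|."""
    base = union_count(frozenset(master))
    return union_count(frozenset(master + [case_code])) == base


# Toy data with invented beneficiary IDs.
toy = {
    "I10": set(range(1, 51)),
    "E11.9": set(range(40, 61)),
    "F41.9": set(range(55, 62)),
    "Z00.00": set(range(1, 11)),
    "G25.82": {3, 58},
}


def toy_union(codes: FrozenSet[str]) -> int:
    return len(set().union(*(toy[c] for c in codes)))


master, total = build_master_list(["I10", "E11.9", "F41.9", "Z00.00"], toy_union)
print(master, total)  # ['I10', 'E11.9', 'F41.9'] 61
print(covers_all_cases(master, "G25.82", toy_union))  # True
```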

Table 2: ICD-10 codes utilized in Phase 1 ‘a priori’ search strategy.

Note: Agoraphobia, social phobia, visuospatial, psychomotor, frontal lobe and executive deficits were not coded at significant rates in those diagnosed with SPS.

Covariates

Covariates (e.g., comorbid conditions, clinical features, and symptoms) for Phase 1 were selected a priori (Table 3). The primary purpose of Phase 1 was to examine evidence regarding associations between SPS and known covariates, e.g., spasms; we also included contrast conditions as ‘negative’ controls, e.g., hypertension. A secondary aim of Phase 1 was to appraise clinical care settings, e.g., inpatient care. Search options for ‘setting of care’ were selected in CCDW, and Boolean algorithms determined group membership. For Phase 2, no ICD-10 coded conditions were selected a priori as covariates because the purpose was to identify co-diagnoses in an unbiased manner. Conditions that occurred in fewer than 10% of SPS individuals (i.e., n<5) were excluded because estimates from small samples with large denominators are unreliable. As SPS is very rare, race and location data were too sparse for analysis.
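A minimal sketch of the Phase 2 inclusion filter, assuming per-code counts of affected beneficiaries are already available. The 10% SPS threshold is from the text, and the requirement that a code also appear in at least 5 non-SPS beneficiaries follows the Results; the function name and placeholder codes are hypothetical. Integer ceiling division avoids floating-point rounding at the threshold.

```python
from typing import Dict, List


def select_codes(sps_counts: Dict[str, int], non_sps_counts: Dict[str, int],
                 n_sps: int, min_percent: int = 10, min_non_sps: int = 5) -> List[str]:
    """Keep codes seen in at least `min_percent`% of SPS cases and in at least
    `min_non_sps` non-SPS beneficiaries."""
    min_sps = -(-n_sps * min_percent // 100)  # ceiling of n_sps * 10%: 48 cases -> 5
    return sorted(
        code for code, n in sps_counts.items()
        if n >= min_sps and non_sps_counts.get(code, 0) >= min_non_sps
    )


# Placeholder codes and invented counts, for illustration only.
print(select_codes({"A": 9, "B": 8, "C": 4, "D": 6},
                   {"A": 1000, "B": 900, "C": 5000, "D": 3}, n_sps=48))  # ['A', 'B']
```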

Table 3: Case counts, mean age, and standard error of mean age by age and gender groups for SPS-diagnosed beneficiaries in the 5% 2017 Medicare data sample.

Note: SPS patients in this sample totaled 97. Counts, mean age, and standard error are also provided for the total CMS-5 sample for those aged 65 and over, and for those SPS patients over age 64 who were diagnosed with SPS as a primary condition by a neurologist (SPS (1°, NEUROLOGY) ≥65). The total sample below age 65 is not provided, as this group is not representative of the broader population. Cell sizes less than 11 are suppressed per the data use agreement (Supp.).

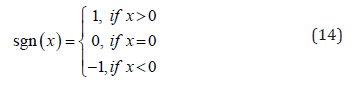

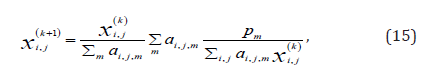

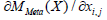

Statistical Methods

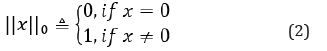

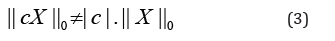

Case counts, proportions, and relative rates were computed and compared using z-tests of proportions, p-values, and confidence intervals; statistics were adjusted for planned comparisons with the Bonferroni-Dunn method to maintain an effective family-wise significance level of 0.05 [22]. The study of very rare conditions in a representative sample population has specific features that distinguish the analysis from the standard case-control observational study, and these features may merit further consideration in applications to rare diseases. First, even when diagnostic certainty is modest, the specificity of the diagnosis is dominated by the very low rate of the diagnosis in the population; i.e., if 50% of those diagnosed with SPS (prevalence 3 per 100,000) were misdiagnosed, specificity would still approach 1. Second, there are implications regarding sensitivity. Given the marked rarity of SPS, there is negligible risk that the clinical features of the total (comparator) population will be ‘contaminated’ by the features of SPS even if a relatively large percentage of SPS patients are undiagnosed (i.e., low sensitivity). For example, if 50% of SPS patients (prevalence 3 per 100,000) were undiagnosed and all of them experienced muscle spasms, this would increase the estimated rate of muscle spasms in the total population by only 0.0015 percentage points.
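The two rare-disease arguments reduce to simple arithmetic, sketched below together with a pooled two-proportion z-test and a Bonferroni per-test threshold. These are standard textbook forms; the study's exact implementation (software, continuity corrections, number of planned comparisons) is not stated, and the function names are illustrative.

```python
import math

PREVALENCE = 3 / 100_000  # diagnostic prevalence used in the text's examples


def specificity_if_misdiagnosed(prevalence: float, misdiagnosed: float) -> float:
    """Specificity when a fraction `misdiagnosed` of diagnosed cases are false positives."""
    false_pos = prevalence * misdiagnosed          # share of population wrongly labelled
    true_cases = prevalence * (1 - misdiagnosed)   # assumes every true case is labelled
    return 1 - false_pos / (1 - true_cases)


def max_contamination(prevalence: float, undiagnosed: float) -> float:
    """Largest absolute shift in a comparator symptom rate if every undiagnosed
    case (left in the comparator group) had the symptom."""
    return prevalence * undiagnosed


def two_proportion_z(x1: int, n1: int, x2: int, n2: int):
    """Pooled two-sided z-test for a difference between two proportions."""
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (x1 / n1 - x2 / n2) / se
    return z, math.erfc(abs(z) / math.sqrt(2))  # two-sided normal p-value


def bonferroni_alpha(family_alpha: float, n_comparisons: int) -> float:
    """Per-test threshold that keeps the family-wise error rate at `family_alpha`."""
    return family_alpha / n_comparisons


print(f"specificity: {specificity_if_misdiagnosed(PREVALENCE, 0.5):.6f}")  # 0.999985
print(f"max shift: {max_contamination(PREVALENCE, 0.5) * 100:.4f} percentage points")  # 0.0015
```

Even with half of all diagnoses wrong, specificity stays near 0.99998, while the comparator contamination is bounded by the prevalence itself; this is why verifying true positives matters more than finding missed cases.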

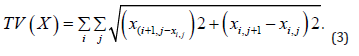

Thus, of these two concerns, validation of diagnostic certainty (true positives) is the more impactful. In Phase 1, we compared SPS and non-SPS groups; rates were not adjusted because demographic information was not available. In Phase 2, we segmented cases by age. For beneficiaries below age 65, adjusted prevalence could not be determined. For beneficiaries age 65 and over (older adults), the age distribution mirrored that of the U.S. population and adjustment was not required [23]. We compared older adult SPS and non-SPS populations, adjusting for multiple comparisons using the false discovery rate (FDR) method of Benjamini and Hochberg [24]. To balance false discoveries against missed detections, the FDR was set to 0.15. On this basis, 27 clinical codes met criteria. Analysis with a volcano plot indicated that all 27 conditions were significant using a p < 0.05 criterion [25]. Hierarchical analysis was performed on the common co-morbid diagnoses (all CMS-5 patients, N = 97) using Gower’s distance algorithm in R [26]. Phase 1 and Phase 2 were also compared: Fisher’s exact test was used to compare SPS coding events in CMS-20 and CMS-5 data, and no difference was observed (Fisher’s statistic = 0.9106). To appraise the use of CMS-20 data despite its inclusion of both younger and older adults, we compared the diagnostic phenotypes of older and younger SPS groups in the CMS-5 data, including all conditions present in at least 10% of the total SPS population (N = 83), using a t-test, and found no difference. Additionally, condition-by-condition testing for differences in proportions was performed, adjusted for multiple comparisons; no single condition was different. Unbiased analysis of the CMS-5 data using the Benjamini-Hochberg false discovery rate method, even with a liberal FDR of 25%, did not find any significant differences [24].
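For readers unfamiliar with the Benjamini-Hochberg procedure, a plain-Python sketch of the standard step-up rule follows. The p-values are invented for illustration; the study's own software for this step is not stated.

```python
from typing import List, Sequence


def benjamini_hochberg(p_values: Sequence[float], fdr: float = 0.15) -> List[int]:
    """Indices of hypotheses rejected by the Benjamini-Hochberg step-up procedure.

    Sort p-values ascending; find the largest rank k with p_(k) <= (k / m) * fdr;
    reject the k smallest p-values.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    k_max = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * fdr:
            k_max = rank
    return sorted(order[:k_max])


# Invented p-values, one per ICD-10 code.
p = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205, 0.212, 0.216]
print(benjamini_hochberg(p, fdr=0.05))  # [0, 1]
print(benjamini_hochberg(p, fdr=0.15))  # [0, 1, 2, 3, 4, 5, 6]
```

Raising the FDR from 0.05 to 0.15 admits more candidate codes at the cost of a higher expected share of false discoveries among them, which is the trade-off the chosen setting reflects.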

Validation by Taxonomic Analysis

NPI numbers in CMS-5 data were cross-walked to the primary taxonomy codes of the National Uniform Claim Committee to determine which SPS diagnostic coding events were initiated by neurologists versus other specialists and general practitioners. The rates of diagnosis with elevated antibody titers (R76.0) and with other codes in the R chapter (i.e., signs, symptoms, and syndromic diagnoses) were assessed.
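A minimal sketch of the crosswalk step, assuming a hypothetical in-memory `npi_to_taxonomy` lookup and claim tuples of (beneficiary, NPI, codes). Grouping a case as ‘neurologist’ when any of its SPS coding events came from a neurology NPI is an assumption for illustration; the identifiers are invented.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

NEUROLOGY = "2084N0400X"             # NPI primary taxonomy for neurology (from the text)
SPS, RAISED_TITER = "G25.82", "R76.0"


def r760_by_group(claims: Iterable[Tuple[str, str, List[str]]],
                  npi_to_taxonomy: Dict[str, str]) -> Dict[str, Tuple[int, int]]:
    """Return {group: (cases with R76.0, cases in group)} for SPS-coded beneficiaries.

    A case is 'neurologist' if any SPS coding event came from an NPI whose primary
    taxonomy is neurology; otherwise 'other'. R76.0 may come from any claim.
    """
    group: Dict[str, str] = {}
    has_titer = set()
    for bene_id, npi, codes in claims:
        if RAISED_TITER in codes:
            has_titer.add(bene_id)
        if SPS in codes:
            if npi_to_taxonomy.get(npi) == NEUROLOGY:
                group[bene_id] = "neurologist"
            else:
                group.setdefault(bene_id, "other")
    counts = defaultdict(lambda: [0, 0])
    for bene_id, g in group.items():
        counts[g][1] += 1
        counts[g][0] += int(bene_id in has_titer)
    return {g: (v[0], v[1]) for g, v in sorted(counts.items())}


# Hypothetical lookup and claims with invented identifiers.
npi_map = {"N1": NEUROLOGY, "N2": "OTHER"}
claims = [
    ("B1", "N1", ["G25.82", "R76.0"]),
    ("B2", "N2", ["G25.82"]),
    ("B3", "N2", ["G25.82"]),
    ("B3", "N1", ["G25.82"]),
    ("B4", "N2", ["R76.0"]),
]
print(r760_by_group(claims, npi_map))  # {'neurologist': (1, 2), 'other': (0, 1)}
```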

Standard Protocol Approvals, Registrations, and Patient Consents

This study was performed under a protocol reviewed and approved by the University of Maryland Institutional Review Board.

Results

Case Counts and Prevalence in Older Adults

We identified 409 SPS-coded persons in the 2016 20% Medicare sample (CMS-20). CMS reports 11,963,696 beneficiaries in CMS-20, 84.4% of whom are over age 64; however, to reduce potential record-selection bias, i.e., bias arising when comparing a population with coding events to a population that also includes those without coding events, we defined the reference population as beneficiaries with one or more ICD-10 coding events (the diagnosis criteria heuristic; Table 1) [27]. The number of reference CMS-20 beneficiaries for 2016 was 6,192,830. In the 2017 5% CMS data sample (CMS-5), we identified 97 SPS-coded persons (SPS), consistent with the CMS-20 sample. Mean age for SPS was 61.25 years (SE = 1.49) and did not differ between females and males (Table 3). For further study, the CMS-5 population was divided into those age 65 and above (older adults, n = 48) and those below age 65 (n = 49). CMS-5 contained records from a total of 1,557,061 older adult beneficiaries with one or more coding events: 896,967 females and 660,094 males. For females and males aged 65 and above, the rate of SPS diagnosis by all healthcare providers (diagnostic prevalence, mean +/- 95% C.I.) was 3.01 (+/- 1.38) and 3.18 (+/- 1.66) per 100,000, respectively. Diagnostic prevalence for older adults did not differ by gender and was 3.08 (+/- 1.06) per 100,000 overall. Age effects on diagnostic prevalence were assessed by segmenting older SPS adults into 5-year age cohorts; diagnostic prevalence decreased monotonically with age, but the oldest and youngest older-adult cohorts were not different (p = .242).
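The diagnostic prevalence point estimate is the case count divided by the reference population. A sketch using the counts reported above (48 older-adult cases among 1,557,061 beneficiaries) follows; the interval method actually used is not stated, so the normal-approximation (Wald) half-width assumed here need not match the reported +/- values.

```python
import math


def rate_per_100k(cases: int, population: int, z: float = 1.96):
    """Point estimate and normal-approximation half-width, both per 100,000."""
    p = cases / population
    half_width = z * math.sqrt(p * (1 - p) / population)
    return p * 100_000, half_width * 100_000


estimate, half = rate_per_100k(48, 1_557_061)  # older-adult SPS cases / reference population
print(f"{estimate:.2f} +/- {half:.2f} per 100,000")  # estimate is 3.08
```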

Clinical Features and Settings of Care in Aggregate Data

SPS clinical characteristics were assessed in CMS-20 using an a priori search strategy informed by literature review; settings of healthcare delivery were also assessed. Use of diagnostic codes for neurological signs and symptoms typical of SPS was elevated (Table 4). Several affective and cognitive diagnoses, especially anxiety, depression, and mild cognitive impairment, were increased (Table 4). Autoimmune diabetes has been closely associated with SPS: diagnoses of type 1 diabetes (rate ratio 3.23, p<0.001) and unspecified diabetes (2.14, p<0.001), but not type 2 diabetes (1.00, p=.395), were increased in SPS. Hypothyroidism diagnosis (E03.9) was also increased (2.83, p<0.001). Regarding the setting of care, SPS diagnosis was coded in the outpatient setting for 194 (47%) patients and in an inpatient setting for 82 (20%); 42 (10.2%) patients received both inpatient and outpatient care, and half of these did not receive skilled nursing care, home health, or hospice. A small minority of patients, 9 (2.2%), received hospice care, while 38 (9.3%) received home health care; 19 (4.6%) required skilled nursing, and a similar proportion used durable medical equipment. In all, 156 (38%) patients were ‘on record’ only, not receiving care in any specified setting. For the non-SPS population, 3,737,224 (60.13%) received outpatient care and 1,026,642 (16.52%) received inpatient care.

Table 4: Phase 1: Symptoms and signs of SPS in Medicare beneficiaries with SPS.

Note: 2016 20% Medicare data sample, total SPS patients with specified signs and symptoms, unadjusted prevalence in the non-SPS population, (unadjusted) rate ratios, and statistical significance. SPS patients in this sample totaled 409, the total patients with one or more diagnoses per protocol numbered 6,192,830.

Coding Events for Individuals With SPS

In CMS-5, 1189 unique ICD-10 codes were used in 48 older adults diagnosed with SPS. Of these, 1075 were ‘diagnostic’, i.e., from ICD-10 Chapters A-Y; 98 of these were used in 5 or more SPS-diagnosed persons (representing 10% of the SPS population) and were included. Of these, 3 were not coded in 5 or more non-SPS beneficiaries and were excluded. Per our data use agreement, cell values below 5 cannot be reported. The number of SPS coding events per beneficiary ranged from 1 to 89, with a median of 3. For 22 patients, G25.82 (SPS) was the most frequently used ICD-10 code during 2017; for nine others, G25.82 was coded ≥10 times during the year, exceeded in frequency only by diagnoses commonly associated with SPS, e.g., abnormalities of gait, or by ‘high-utilization’ conditions, e.g., renal failure. We divided the SPS population into tertiles based on the number of G25.82 coding events: 32 individuals with one G25.82 coding event, 32 with 2-5, and 33 with 6-89. Diagnosis rates for all ICD-10 codes were compared between tertiles using tests of proportions, and no significant differences were identified. The smallest p-value pertained to diagnosis with R76.0 (raised antibody titer) in the top versus bottom tertiles (unadjusted p = 0.00013). Those diagnosed with raised antibody titers (R76.0) also carried diagnoses typically associated with GAD65 antibodies (number): gait abnormalities (9), muscle spasms (8), ataxia (5), and type 1 diabetes (5). For further study, records with single G25.82 coding events were not excluded.
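The tertile split above uses fixed cut points on the number of G25.82 coding events (1, 2-5, 6 or more). A minimal sketch of that banding, with hypothetical function names and invented per-case counts:

```python
from collections import Counter
from typing import Dict


def event_band(n_events: int) -> str:
    """Band a case by its number of G25.82 coding events (cut points from the text)."""
    if n_events < 1:
        raise ValueError("every case has at least one G25.82 coding event")
    if n_events == 1:
        return "1"
    if n_events <= 5:
        return "2-5"
    return "6+"


def band_sizes(events_per_case: Dict[str, int]) -> Counter:
    """Number of cases falling in each band."""
    return Counter(event_band(n) for n in events_per_case.values())


# Invented counts of G25.82 coding events per case.
toy = {"B1": 1, "B2": 3, "B3": 89, "B4": 1, "B5": 6}
print(dict(band_sizes(toy)))
```

Diagnosis rates can then be compared between the lowest and highest bands with the same two-proportion test used elsewhere.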

Comparison of Older and Younger Adults with SPS in CMS-5 Data

The CMS-5 data were used in a sensitivity analysis of age and sex as potential confounders of major clinical features in the larger sample (CMS-20), for which demographic data were not available. To do this, we generated diagnostic phenotyping profiles, consisting of diagnosis rates for all possible ICD-10 codes, for older and younger SPS-coded populations. We compared the relative diagnosis rates for older and younger SPS populations and found no significant differences. The phenotype profile comparison for older adult SPS-diagnosed and non-SPS populations is shown in Figure 1A. This illustrates that SPS carries a substantial disease burden across endocrine, psychiatric, neurologic, cardiopulmonary, and musculoskeletal conditions, as well as a marked increase in symptom burden (R-series codes).

Unbiased Analysis of Distinctive Clinical Characteristics

To identify clinical characteristics that may not be established a priori, we used an unbiased ‘large data’ approach to identify differences between SPS and non-SPS populations in CMS-5. Conditions with increased prevalence were identified by comparing prevalence in SPS with that in the non-SPS population, followed by a Benjamini-Hochberg false discovery rate adjustment for multiple comparisons, a statistical method widely applied in gene expression analysis [24]. The identified conditions are listed in Table 5 with ICD-10 codes and annotation [16]. Through this analysis, we observed that those with SPS have higher rates of diagnoses in several categories, including autoimmune, endocrine, mental health, neurological, and gastrointestinal disorders (Table 5). Raised antibody titers, gait abnormalities, ataxia, and muscle spasms demonstrated the highest risk ratios for SPS. In addition, SPS patients have high rates of symptom-focused clinical coding events, including weakness, shortness of breath, fatigue, and headache. Identified comorbid conditions included commonly recognized comorbidities such as low back pain, depression, and anxiety, but also obstructive sleep apnea, nausea, and dysphagia. Variation in relative rates and statistical significance is efficiently depicted using volcano plots (Figure 1B), which visually identify statistically significant rates of diagnosis in SPS compared with non-SPS. Hierarchical analysis of conditions commonly co-diagnosed with SPS demonstrated that spasms and abnormal gait were the diagnoses most strongly associated with raised antibody titers in SPS; these relationships are illustrated in a dendrogram (Figure 1C).
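Each point on a volcano plot pairs an effect size with its significance. A minimal sketch, assuming log2 risk ratio on the x-axis and −log10 of a pooled two-proportion z-test p-value on the y-axis (a common convention; the exact axes and test used for Figure 1B are not specified). The counts in the usage line are invented.

```python
import math
from typing import Tuple


def volcano_point(x_sps: int, n_sps: int, x_ref: int, n_ref: int) -> Tuple[float, float]:
    """(log2 risk ratio, -log10 two-sided p) for one ICD-10 code.

    Requires x_sps > 0 and x_ref > 0 so the risk ratio and its log are defined.
    The p-value comes from a pooled two-proportion z-test and is floored at a tiny
    positive number so that -log10 stays finite when p underflows to zero.
    """
    p_sps, p_ref = x_sps / n_sps, x_ref / n_ref
    pooled = (x_sps + x_ref) / (n_sps + n_ref)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_sps + 1 / n_ref))
    z = (p_sps - p_ref) / se
    p_value = max(math.erfc(abs(z) / math.sqrt(2)), 1e-300)
    return math.log2(p_sps / p_ref), -math.log10(p_value)


# Invented counts: 10 of 48 SPS cases vs 1,000 of 1,000,000 comparators.
print(volcano_point(10, 48, 1_000, 1_000_000))
```

Points far to the right and high up are codes that are both much more frequent in SPS and unlikely to reflect chance.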

Table 5: Phase 2: Frequencies and rates for SPS and Total populations from CMS-5 data for conditions identified through unbiased testing. Significance determined utilizing Benjamini-Hochberg method, with P-value for difference of proportions, and risk ratios, sorted by risk ratio from greatest to least. Total population is 1,557,061.

Note: P-values are calculated based on differences in proportions; values were ranked and selected using the false discovery rate procedure [Benjamini, 1995]. Annotation was generated by automated sorting of CDC annotation codes and verified manually [CDC, 2017]. The SPS population includes all those coded with G25.82 in the 5% CMS sample population, see row 1.

Case-By-Case Analysis with Provider Taxonomy

A case-by-case validation was performed in the older adults with SPS (CMS-5 >= 65 y.o.) by linking provider taxonomic classification through an NPI look-up table. We found that 13 of 48 older adults received a coded diagnosis of SPS (G25.82) in the primary position by neurologists; of these 8 were also diagnosed with raised antibody titers (R76.0), Table 6. The age and gender characteristics of those diagnosed with SPS as a primary diagnosis by a neurologist are reported in Table 3. The remainder included 10 older adults with SPS as a primary diagnosis claimed by non-neurologist providers; 20 with SPS as a secondary diagnosis by non-neurologist providers; and 5 others. None of those with either primary or secondary diagnosis of SPS by non-neurologist providers (in the absence of neurological primary or secondary diagnosis) were diagnosed with raised antibody titers, suggesting a strong linkage between neurologist diagnosis of SPS and appraisal of antibody titers.
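The tiered prevalence estimates follow directly from these counts: 48 older adults coded with SPS by any provider, 13 coded in the primary position by a neurologist, and 8 of those also coded with R76.0, each divided by the 1,557,061 older-adult beneficiaries in CMS-5. The dictionary labels below are illustrative.

```python
REFERENCE_POPULATION = 1_557_061  # CMS-5 older adults with >= 1 coding event (from the text)

CASE_TIERS = {
    "SPS coded by any provider": 48,
    "SPS primary, by a neurologist": 13,
    "SPS primary, by a neurologist, plus R76.0": 8,
}


def per_million(cases: int, population: int = REFERENCE_POPULATION) -> float:
    """Diagnostic prevalence per million beneficiaries."""
    return cases / population * 1_000_000


for label, cases in CASE_TIERS.items():
    print(f"{label}: {per_million(cases):.1f} per million")
# SPS coded by any provider: 30.8 per million
# SPS primary, by a neurologist: 8.3 per million
# SPS primary, by a neurologist, plus R76.0: 5.1 per million
```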

Table 6: Taxonomy, coding position of SPS diagnosis, and documentation of a raised antibody titer.

Note: 1) Other medical clinicians had taxonomic classifications from family medicine, emergency medicine, acute and critical care medicine, and pulmonary medicine. 2) Other primary diagnoses included G20, Parkinson’s disease; G80.1, Spastic diplegic cerebral palsy; M54.17, Lumbosacral radiculopathy; C71.9, Unspecified malignant brain neoplasm; M06.9, Unspecified rheumatoid arthritis.

Discussion

This study applies multiple approaches to estimate the prevalence of SPS diagnosis and to appraise commonly comorbid conditions and symptoms. We observed a diagnostic prevalence of 3 per 100,000 in a large, diverse population, indicating that SPS is diagnosed infrequently. Using taxonomic analysis, we observed that 13 of 48 older adults diagnosed with SPS had the diagnosis recorded by a neurologist in the primary diagnostic position. On this basis, the neurological diagnostic prevalence is estimated here to be 8 per million; further restricting the diagnosis to those diagnosed with SPS by a neurologist as a primary condition and also diagnosed with raised antibody titers would lower the estimate to 5 per million. Determining the prevalence of a rare condition is challenging, and there is potential for both over- and under-estimation. We acknowledge that many of the providers filing claims with the ICD-10 code for SPS were unlikely to possess expert knowledge of this condition, suggesting possible over-diagnosis. By contrast, SPS is thought to be so rare that many professionals will not recognize it when they see it, suggesting possible under-diagnosis. Galli and colleagues used a stringent approach to identify database records with a high probability of SPS [13]. Excluding 63 of 78 records identified, they estimated the point prevalence of GAD65Ab-associated SPS as 2.06 per million.

An important limitation of that study was that the reference population had a profound male predominance; if SPS is female-predominant, this could bias towards underestimation [27]. In addition, that population had a broad age distribution; given that onset of SPS typically occurs in the mid-40s, this would also contribute to underestimation relative to the at-risk population. The clinical and research diagnostic challenges pertaining to SPS are substantive; limiting study to increased GAD65Abs per se does not ensure diagnosis of SPS, as patients with type 1 diabetes, who are far more prevalent and potentially overlapping, often have increased GAD65Abs [3,10]. The prevalence of low-titer GAD65 antibodies is relatively high in the general population; for these reasons, low-titer antibody levels should not be used as pathognomonic of SPS, and caution is warranted. Criteria must recognize uncertainty and inaccuracy in diagnosis, e.g., SPS mimics; the cumulative nature of the coding data, which results in the persistence of imprecise, tentative, and rejected diagnoses; and possible failure to distinguish GAD-associated SPS from GAD-associated cerebellar ataxia. Knowledge of key SPS features as highlighted in this work may help clinicians code SPS only when appropriate.

This report also provides new Bayesian estimates of comorbid conditions, common symptoms, and neurological signs. We used two distinct approaches to analyzing data, applied separately: a traditional a priori approach and an unbiased ‘large data’ approach. The results of these approaches were complementary and together provide a detailed profile of SPS. We identified increased prevalence of weight loss, weakness, and obstructive sleep apnea (OSA), along with a more comprehensive and detailed characterization of known symptoms and comorbidities such as low back pain, neck pain, headache, depression, anxiety, hypothyroidism, dyspnea, and epilepsy. To some extent, SPS features have been described phenomenologically over several years of clinical study [1-13]. By combining directed and unbiased search methodologies, we observed that while SPS produces high rates of muscle spasms and pain, especially in the axial musculature, headache was prevalent as well. Neurological signs associated with SPS were confirmed through targeted searching and showed increased rates of abnormal reflexes, meningismus, abnormal gait, and repeated falls. In short, this appraisal of older adults with SPS used population-level Medicare records to construct a clinical portrait that extends prior studies and yields useful detail regarding co-morbid conditions and associated clinical symptoms. The finding that weight loss, OSA, and dysphagia occurred at increased rates in older SPS patients compared with unaffected older adults suggests that respiratory and digestive functions may need to be addressed to improve quality of life and avoid compounding morbidity in patients with SPS.
These findings are consistent with known features of SPS: co-contraction of agonist and antagonist muscles is a prominent electrophysiological finding in SPS, and a systematic assessment study conducted at NIH found that many patients exhibited breathing problems and rigidity of the abdominal wall as well as the expected lumbar muscle spasms, global stiffness, and frequent falls [5,7,9,11]. Limitations of this study include the extent of data available through records of the Centers for Medicare & Medicaid Services (CMS); in this study, these were constrained to diagnostic coding, site of care, and demographic features of disease. The use of International Classification of Diseases (ICD) coding data as a research tool has not been without controversy, and earlier versions certainly functioned primarily as administrative tools [14]. With the widespread adoption of electronic health records and the engagement of physicians and other clinicians in code development, ICD-10 codes are qualitatively different from prior versions [15,16].

Diagnostic coding is now widely integral to clinical thought and practice: it is used to communicate diagnostic reasoning, qualify patients for tests and treatments, and ensure that practice quality metrics are attained, as envisioned by the World Health Organization [28]. Research methods to learn from these data are advancing [14,26,29]. Nonetheless, ICD coding remains limited by both the skill and engagement of the diagnosing provider and by electronic health record factors. Although the sensitivity and specificity of ICD-10 codes for rare and common diseases are critically important to a study of this nature, literature on this topic is limited. The existing literature on U.S. ICD-10 validation is especially sparse because conversion to the ICD-10 system was only finalized in late 2016. Nonetheless, international research literature indicates that although sensitivity is moderate, it is generally in the acceptable range; specificity is almost universally very high, approaching 99% for most conditions [30]. Technically, specificity here is very high as a consequence of the rarity of SPS; however, the positive predictive value of SPS diagnosis may warrant further study. SPS diagnosis remains grounded in clinical discernment of signs and symptoms, augmented by diagnostic testing, e.g., markedly elevated GAD65 antibodies [5,7,10].
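Why positive predictive value can lag behind a very high specificity is a direct consequence of Bayes' rule at low prevalence. The sketch below uses the 3 per 100,000 prevalence from this study; the sensitivity (0.8) and the specificity values are hypothetical, since the study does not report either for G25.82.

```python
def positive_predictive_value(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Bayes' rule: probability that a beneficiary coded positive truly has the condition."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)


# Hypothetical inputs: the study does not report sensitivity or specificity for G25.82.
for spec in (0.99999, 0.9999, 0.999):
    ppv = positive_predictive_value(sensitivity=0.8, specificity=spec, prevalence=3e-5)
    print(f"specificity {spec}: PPV = {ppv:.2f}")
```

Under these assumptions PPV falls from roughly 0.71 to 0.19 to 0.02 as specificity drops from 99.999% to 99.9%, so even small false-positive rates can dominate the coded population of a condition this rare.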

Given the role of elevated GAD65 auto-antibodies in autoimmune SPS, our finding that only a minority of older adults with ICD-10 coding for SPS were also diagnosed with an elevated antibody titer suggests that raising awareness of antibody testing for SPS, and of the critical importance of identifying marked elevations in contrast to the moderate elevations typical of diabetes, may improve clinical practice [3,5]. Most anticipated observations in this study were consistent with prior studies; however, one important exception was noted [2,3]. SPS patients have high rates of diabetes, but this was not identified by our unbiased Phase 2 search strategy. Close examination of individual claims data showed that providers used multiple distinct diabetes codes. This may be a consequence of regional variation in coding, as these patients are geographically dispersed. By contrast, weakness is a feature that has not been prominently described previously but may be important.

Conclusion

SPS affects adults even in later life and is rarely diagnosed. Unbiased analysis suggests that muscle spasms, abnormal gait, and raised antibody titers are key features. Clinical features may include obstructive sleep apnea, weakness, and dysphagia. Improved recognition of core SPS features and additional study are both needed. Stiff-person syndrome is diagnosed in U.S. older adults at a rate of 3 per 100,000, but this falls to 8 per million when restricted to those diagnosed by neurologists as a primary claim diagnosis. SPS is principally a rare neuro-immunological condition associated with severe muscle spasms, disabling gait disturbance, and markedly raised antibodies to GAD65. The syndrome may variably include pain, respiratory and gastrointestinal compromise, depression, anxiety, diabetes, hypothyroidism, weakness, and cognitive changes. Multimorbidity likely contributes to increased hospitalization, and patients typically require expert care. Improved recognition of core features will advance more rapid and precise SPS diagnosis, whereas recognition of the broader disease phenotype may further optimize clinical care for this complex, disabling, and painful condition.

For More Articles: Biomedical Journal Impact Factor: https://biomedres.us